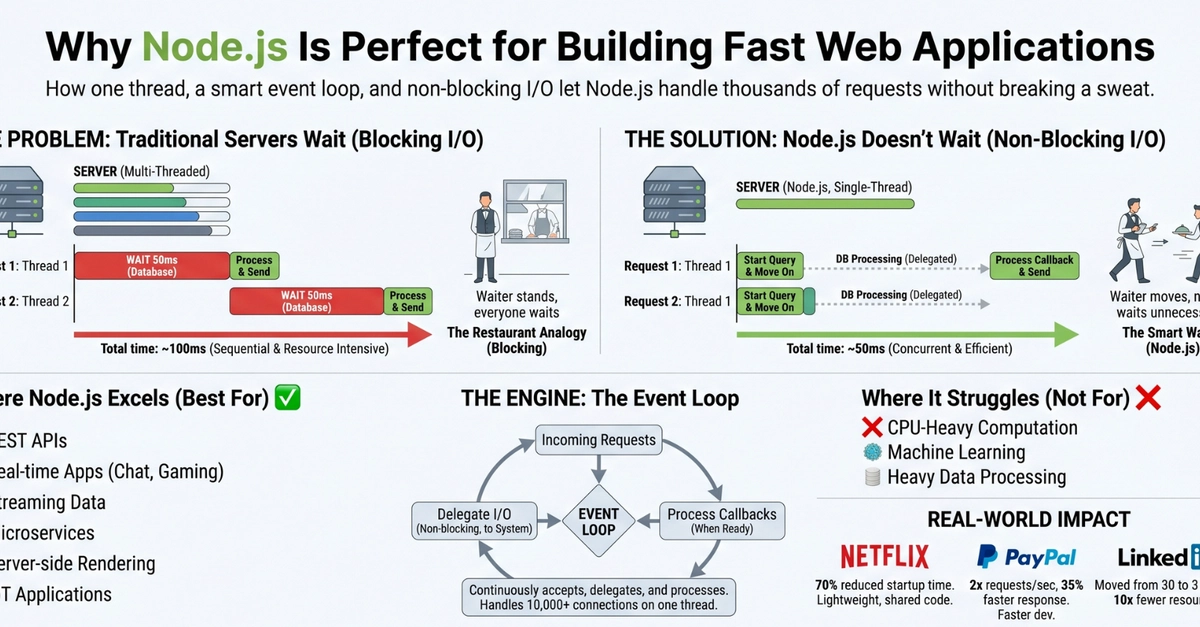

Here’s a number that should make you pause: 200 milliseconds. That’s an eternity in computer time, especially when your server is just sitting there, twiddling its digital thumbs, waiting for a database query or a third-party API to spit back some data. Yet, for traditional, blocking web servers, this is the norm. They waste precious resources simply waiting. Node.js, on the other hand, looks at this waiting game and scoffs.

For two decades now, I’ve watched Silicon Valley churn out buzzwords faster than I can drink coffee, but the fundamental challenge of building responsive web applications – dealing with I/O – hasn’t changed. And Node.js, despite all the hype it’s generated over the years, actually has a pretty solid answer to that challenge.

Who’s Actually Making Money Here?

Companies like Netflix, PayPal, and LinkedIn didn’t adopt Node.js on a whim. They saw dollars and cents in performance improvements, and that’s the only metric that truly matters when you’re running services at scale. The underlying architecture, which is deceptively simple when you strip away the marketing fluff, is what enables this. It boils down to three interconnected ideas:

- Non-blocking I/O: Don’t wait around for slow operations.

- Event-driven architecture: React when things are ready.

- Single-threaded event loop: Handle many connections with just one thread.

These aren’t abstract concepts for academics; they are the pragmatic engineering choices that define Node.js’s performance.

The Tyranny of Waiting

I/O, or Input/Output, is the server’s way of talking to the outside world. Databases, file systems, external APIs – these are all I/O operations. And they are inherently slow. A database query can easily take 50 milliseconds. An API call? Double or triple that, no sweat. Your code might be blazingly fast, but it’s tethered to the glacial pace of physical hardware and network latencies.

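In a blocking model, this is a disaster. Imagine a single chef in a kitchen. A customer orders. The chef starts cooking, and then just stands there, staring at the pot, until it’s done. Another customer could be served in the meantime, but the chef is busy waiting. That’s a blocking server thread. Traditional servers cope by hiring more chefs (one thread per request), but every one of them still spends most of the shift staring at a pot, and each one costs memory.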

    Request 1: "Get user data from database"
      → Start query → WAIT 50ms → Got data → Send response
      (Thread is FROZEN for 50ms doing NOTHING)

    Request 2: "Get product list from database"
      → Can't start: thread is busy waiting for Request 1!
      → WAIT until Request 1 finishes
      → Start query → WAIT 50ms → Got data → Send response

    Total time: ~100ms for 2 requests (sequential)

Now, picture Node.js’s approach. It’s like that same chef, but instead of just staring at the pot, they put the order in, hand it off to a sous chef (the underlying system), and immediately start taking the next order. When the sous chef yells, “Your dish is ready!”, the main chef goes and plates it. They delegate the waiting and are always available for new tasks.

    Request 1: "Get user data from database"
      → Start query → Don't wait! Move on immediately

    Request 2: "Get product list from database" (starts RIGHT AWAY)
      → Start query → Don't wait! Move on immediately

    ...50ms later...

    Database response 1 arrives → callback handles it → Send response 1
    Database response 2 arrives → callback handles it → Send response 2

    Total time: ~50ms for 2 requests (concurrent!)

This is the fundamental difference that translates into tangible performance gains. Node.js isn’t trying to win a race in raw computational speed (JavaScript will never outrun C++ there), but it’s mastered the art of efficient multitasking in an I/O-bound world.
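To see those two timelines in running code, here is a minimal sketch. `fakeQuery` is a hypothetical stand-in for a database call (it is just a 50 ms timer, not a real driver API). One caveat: the sequential version doesn't freeze a thread the way a blocking server would; it only reproduces the one-after-the-other timeline so the two totals can be compared.

```javascript
// Hypothetical stand-in for a database call: resolves after `ms` milliseconds.
function fakeQuery(label, ms = 50) {
  return new Promise((resolve) => setTimeout(() => resolve(label), ms));
}

// Sequential: the second query is not started until the first one finishes.
async function sequential() {
  const start = Date.now();
  const user = await fakeQuery("user data");
  const products = await fakeQuery("product list");
  return { results: [user, products], elapsed: Date.now() - start };
}

// Concurrent: both queries are started before either result is awaited.
async function concurrent() {
  const start = Date.now();
  const results = await Promise.all([
    fakeQuery("user data"),
    fakeQuery("product list"),
  ]);
  return { results, elapsed: Date.now() - start };
}

async function main() {
  const seq = await sequential();
  const con = await concurrent();
  console.log(`sequential: ~${seq.elapsed}ms`);
  console.log(`concurrent: ~${con.elapsed}ms`);
  return { seq, con };
}

const timings = main();
```

On a typical machine the first number should land near 100 ms and the second near 50 ms, though exact values vary with timer precision.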

The Event Loop: A Symphony of Responsiveness

Node.js achieves this non-blocking magic through its event-driven architecture and the famous event loop. When an I/O operation is initiated, Node.js doesn’t spin its wheels. It registers the request along with a callback and moves on to the next task. When that operation eventually completes, it doesn’t interrupt whatever code is currently running; the completion is queued as an event, and the event loop invokes the registered callback once the current work has finished. It’s a clean, asynchronous dance that keeps the server humming.
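The "doesn't interrupt the current flow" part is easy to check for yourself. In this small sketch a timer stands in for a completed I/O operation (an assumption made to keep the example self-contained), and the `order` array is only there to record what ran when. The timer is due almost immediately, yet its callback has to wait until the synchronous loop is done.

```javascript
const order = [];

// Schedule a callback to run as soon as possible (0 ms delay).
setTimeout(() => {
  order.push("timer callback");
  console.log(order.join(" -> "));
}, 0);

order.push("sync work started");

// Synchronous work: the timer becomes due, but it cannot interrupt this loop.
let sum = 0;
for (let i = 0; i < 1e6; i++) {
  sum += i;
}

order.push("sync work finished");
// prints: sync work started -> sync work finished -> timer callback
```

The callback only runs after the script's synchronous code has returned control to the event loop, which is why it always comes last here.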

Take this simple file read example:

    const fs = require("fs");

    console.log("1. Starting file read...");

    // Non-blocking: readFile returns immediately and the callback runs later.
    fs.readFile("data.txt", "utf8", (error, content) => {
      // The callback fires once the read has finished or failed.
      if (error) {
        console.error("Could not read data.txt:", error.message);
        return;
      }
      console.log("3. File content:", content);
    });

    console.log("2. Continuing with other work...");

The output tells the story:

    1. Starting file read...
    2. Continuing with other work...
    3. File content: (actual file contents here)

Node.js started the file read, immediately printed “Continuing with other work…”, and only then, once the file had actually been read, executed the callback to print the content. It’s a paradigm shift from the synchronous, step-by-step execution that many developers are accustomed to. The server remains responsive because its thread is never stuck waiting on I/O; it’s always free to pick up a new task or handle a completed one.
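The same ordering shows up if you swap the callback for the promise-based `fs/promises` API and `async`/`await`, which is a different style from the callback example above, not a replacement for it. This is a sketch under a few assumptions: it creates its own throwaway `data.txt` in a temp directory so it runs anywhere, and the `read-demo-` prefix and the `steps` array are made up for illustration.

```javascript
const fs = require("fs/promises");
const os = require("os");
const path = require("path");

const steps = [];

async function readDemo() {
  // Create a throwaway file so the sketch does not depend on an existing one.
  const dir = await fs.mkdtemp(path.join(os.tmpdir(), "read-demo-"));
  const file = path.join(dir, "data.txt");
  await fs.writeFile(file, "hello from data.txt", "utf8");

  steps.push("1. Starting file read...");
  const pending = fs.readFile(file, "utf8"); // the read starts; nothing waits yet
  steps.push("2. Continuing with other work...");

  try {
    const content = await pending; // this function pauses; the thread does not
    steps.push(`3. File content: ${content}`);
  } catch (error) {
    steps.push(`3. Read failed: ${error.message}`);
  } finally {
    await fs.rm(dir, { recursive: true, force: true }); // clean up the temp dir
  }
  return steps;
}

const finished = readDemo().then((result) => {
  result.forEach((line) => console.log(line));
  return result;
});
```

`await` reads like blocking code, but it only suspends `readDemo`; the event loop stays free to run other callbacks while the read is in flight.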

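This event loop is the heart of Node.js. Your JavaScript runs on a single thread, yes, but the waiting itself is handed off to the operating system (and, for work such as file I/O, to a small internal thread pool), which is what makes that one thread so efficient at managing many asynchronous operations. It’s not about brute force; it’s about intelligent orchestration.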
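Here is a rough sketch of what "one thread, many connections" looks like with Node's built-in `http` module. The 50 ms timer is a simulated I/O wait, and the `get` and `run` helpers and the request count of 20 are assumptions made for the demo, not anything from a real deployment. The server and its clients share one process only to keep the example self-contained.

```javascript
const http = require("http");

// Each request "waits on I/O" for 50 ms (simulated with a timer) before replying.
const server = http.createServer((req, res) => {
  setTimeout(() => {
    res.writeHead(200, { "Content-Type": "text/plain" });
    res.end("done");
  }, 50);
});

// Send one GET request and resolve with the response status code.
function get(port) {
  return new Promise((resolve, reject) => {
    http
      .get({ host: "127.0.0.1", port, path: "/", agent: false }, (res) => {
        res.resume(); // discard the body
        res.on("end", () => resolve(res.statusCode));
      })
      .on("error", reject);
  });
}

async function run(requestCount = 20) {
  // Port 0 asks the OS for any free port.
  await new Promise((resolve) => server.listen(0, "127.0.0.1", resolve));
  const { port } = server.address();

  const start = Date.now();
  const statuses = await Promise.all(
    Array.from({ length: requestCount }, () => get(port))
  );
  const elapsed = Date.now() - start;

  await new Promise((resolve) => server.close(resolve));
  return { statuses, elapsed };
}

const outcome = run().then((result) => {
  console.log(`${result.statuses.length} requests in ~${result.elapsed}ms`);
  return result;
});
```

Handled one at a time, 20 requests with a 50 ms wait each would need about a second. Because the waits overlap, the total should come in far below that, with the exact figure depending on the machine.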

Is Node.js Always the Answer?

Look, I’ve seen enough tech cycles to know that no single technology is a panacea. Node.js excels at I/O-bound tasks – handling tons of concurrent network requests, building APIs, and real-time applications. If your application is CPU-bound, meaning it’s constantly doing heavy computation (like complex mathematical simulations or image processing), then Node.js might not be the most efficient choice: a long calculation on the main thread holds up the event loop, and every other request waits behind it. For those tasks, you’d typically want a language that can use multiple CPU cores more directly, like Go or even good ol’ C++.
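You can watch a CPU-bound task starve the event loop with a few lines. In this sketch `burnCpu` is a hypothetical busy-wait standing in for heavy computation, and the 10 ms and 200 ms figures are arbitrary. A timer asks to run after 10 ms, but it cannot fire until the computation lets go of the thread.

```javascript
// Busy-wait for `ms` milliseconds: a stand-in for heavy computation.
function burnCpu(ms) {
  const end = Date.now() + ms;
  let iterations = 0;
  while (Date.now() < end) {
    iterations++;
  }
  return iterations;
}

const scheduledAt = Date.now();

// Ask for a callback in 10 ms and record how late it actually runs.
const timerDelay = new Promise((resolve) => {
  setTimeout(() => resolve(Date.now() - scheduledAt), 10);
});

burnCpu(200); // the event loop cannot run any callback until this returns

timerDelay.then((delay) => {
  console.log(`timer requested after 10ms, ran after ~${delay}ms`);
});
```

The reported delay comes out at 200 ms or more rather than 10 ms. On a real server, every pending request would be stuck behind the computation in the same way.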

But for the vast majority of web applications out there, where the bottleneck is network latency and waiting for external services, Node.js’s architectural strengths shine. It’s a pragmatic choice for developers who want to build fast, scalable applications without overcomplicating their infrastructure. The real magic isn’t in the language itself, but in how it orchestrates the execution, turning potential bottlenecks into opportunities for concurrency.

And let’s be honest, in the end, it’s about building things that users actually want to use, and that often comes down to speed and responsiveness. Node.js delivers that.