Look, the hype train for AI agents has been chugging along for a while, promising to automate everything from writing code to managing your calendar. Most of it, though, has been confined to the digital realm of APIs, CLIs, and well-defined code structures. We’ve seen projects try to orchestrate workflows, sure, but the idea of an AI truly using an application—the messy, visual, unpredictable way a human does—felt like a distant dream. Until now. ByteDance’s UI-TARS-Desktop drops into this landscape, and it’s forcing a pivot.

Everyone expected agents to get smarter at writing software or calling existing services. What they didn’t necessarily anticipate was agents learning to operate existing software interfaces, the kind you and I wrestle with daily on Windows, macOS, or even just our web browsers. UI-TARS-Desktop isn’t just another AI tool; it’s a direct challenge to the premise of how we thought AI agents would interact with the world.

AI That Actually Sees Your Screen

At its core, UI-TARS-Desktop is a multimodal GUI agent stack. That’s a mouthful, I know, but the critical part is “multimodal GUI.” What does that mean in plain English? It means the AI can “see” your screen, understand what’s displayed—buttons, text fields, menus, the whole shebang—and then, crucially, act on it. Think of it like this: instead of needing a programmer to write specific instructions for an app, you tell the AI, “Open this report, find the total sales, and email it to accounting.” And it just… does it.
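To make that "see, understand, act" cycle concrete, here is a minimal sketch of the loop such an agent might run. Everything in it is hypothetical: `Action`, `run_agent`, and `scripted_model` are illustrative names, not UI-TARS APIs, and the "model" is a canned script standing in for a real vision-language model.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    kind: str         # "click", "type", or "done"
    target: str = ""  # human-readable description of the UI element
    text: str = ""    # text to type, if any


def run_agent(
    task: str,
    capture_screen: Callable[[], bytes],
    decide: Callable[[str, bytes, List[Action]], Action],
    execute: Callable[[Action], None],
    max_steps: int = 10,
) -> List[Action]:
    """Observe the screen, ask the model for one action, perform it, repeat."""
    history: List[Action] = []
    for _ in range(max_steps):
        screenshot = capture_screen()                # observe
        action = decide(task, screenshot, history)   # think
        history.append(action)
        if action.kind == "done":
            break
        execute(action)                              # act
    return history


def scripted_model(script: List[Action]) -> Callable[[str, bytes, List[Action]], Action]:
    """Hypothetical stand-in for a vision model: replays a fixed list of actions."""
    steps = iter(script)

    def decide(task: str, screenshot: bytes, history: List[Action]) -> Action:
        return next(steps, Action("done"))

    return decide


executed: List[Action] = []
history = run_agent(
    task="Open the report and email total sales to accounting",
    capture_screen=lambda: b"fake-screenshot-bytes",
    decide=scripted_model([
        Action("click", "Reports menu"),
        Action("type", "Email body field", "Total sales attached"),
    ]),
    execute=executed.append,
)
print([a.kind for a in history])
```

The point of the shape is that the application never has to cooperate: the only inputs are pixels and a task description, and the only outputs are mouse and keyboard actions.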

This is where it diverges from your standard Robotic Process Automation (RPA) tools. Those things are brittle. They typically rely on hardcoded element IDs or pixel coordinates. A tiny UI update, a renamed element, a shifted layout, and your entire RPA script can break. UI-TARS, on the other hand, is supposed to understand the semantics. It’s trained to recognize what a “Save button” looks like, regardless of its exact position or styling. It grasps the intent of UI elements. That’s a leap.
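A toy comparison shows why the brittleness matters. The sketch below is an assumption-heavy stand-in: a real GUI agent reasons over screenshots with a vision model, while this example fakes "semantic" matching with a simple role-and-label check. The `Element` type, the IDs, and both finder functions are invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Element:
    element_id: str
    role: str
    label: str
    x: int
    y: int


def find_by_id(screen: List[Element], element_id: str) -> Optional[Element]:
    """RPA-style lookup: works only while the hardcoded ID stays the same."""
    return next((e for e in screen if e.element_id == element_id), None)


def find_by_meaning(screen: List[Element], role: str, intent: str) -> Optional[Element]:
    """Toy stand-in for semantic matching: look for the role and the intent word."""
    wanted = intent.lower()
    return next(
        (e for e in screen if e.role == role and wanted in e.label.lower()),
        None,
    )


# The same "Save" button before and after a hypothetical redesign.
before = [Element("btn-save-01", "button", "Save", 40, 600)]
after = [Element("toolbar-action-7", "button", "Save changes", 900, 20)]

old_script_hit = find_by_id(after, "btn-save-01")
semantic_hit = find_by_meaning(after, "button", "save")

print("hardcoded ID still works:", old_script_hit is not None)
print("semantic lookup still works:", semantic_hit is not None)
```

After the redesign the ID lookup comes back empty while the intent-based lookup still finds the button, which is the property the vision-based approach is betting on.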

UI-TARS-Desktop solves a fundamental problem: how can an AI agent interact with any software without requiring that software to provide an API or plugin support?

This is the holy grail for automating legacy systems or bespoke enterprise software that never had the foresight (or budget) for API development. You’ve got that ancient internal tool, spitting out data that needs manual entry into some other system? Forget writing complex middleware. The UI-TARS approach is to train an AI to be your new hire, learning the ropes by watching the screen.

Agent TARS vs. UI-TARS Desktop: The Dynamic Duo

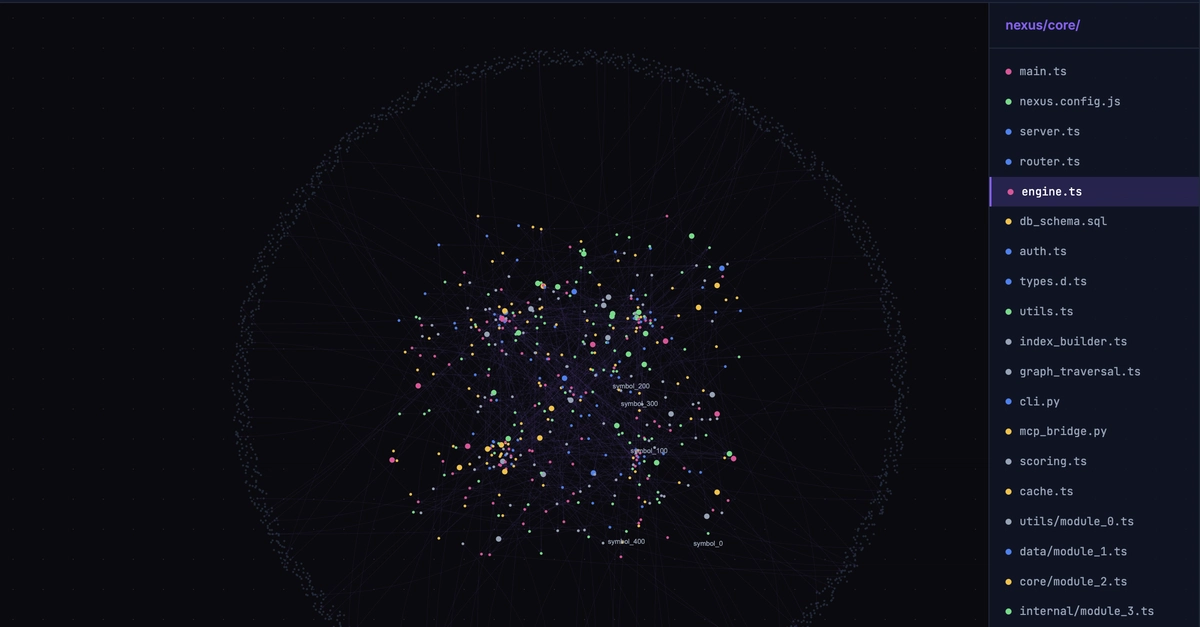

ByteDance has packaged this as two complementary projects. First, there’s Agent TARS. This is the developer-facing side. It brings that visual understanding into the terminal. Think of it as the brain that can interpret what it sees on a screen and translate that into actions. It’s designed to be plug-and-play, with commands that look almost deceptively simple.

```bash
# No installation needed: run directly with npx
npx @agent-tars/cli@latest

# Specify a model provider (defaults to Doubao; Claude also supported)
npx @agent-tars/cli@latest --model claude-opus-4-6

# Launch with Web UI (visual interface)
npx @agent-tars/cli@latest --ui

# Start with a specific task
npx @agent-tars/cli@latest -p "Search for today's AI news and summarize the key points"
```

Then you have UI-TARS Desktop. This is the actual native application that executes those actions on your local machine. It’s the hands that move the mouse and type on the keyboard. It’s the piece that turns abstract commands into tangible desktop interactions. Getting it running involves cloning the repo and installing dependencies, or, thankfully, downloading pre-built installers.

```bash
# Clone the repository (monorepo structure)
git clone https://github.com/bytedance/UI-TARS-desktop.git
cd UI-TARS-desktop

# Install dependencies
pnpm install

# Launch UI-TARS Desktop
pnpm run dev:desktop

# Or download pre-built installers from the Releases page:
# - macOS: UI-TARS-Desktop
```

The Tech Behind the Magic (Or So They Claim)

The wizards at ByteDance AI Research, leveraging their Seed series of vision-language models (VLMs), have apparently trained models specifically for this GUI understanding and control gig. They’re talking about a “hybrid browser agent strategy” that lets the agent drive a browser through the GUI, through the DOM (Document Object Model), or through a hybrid of the two. The goal seems to be getting the best of both worlds: understanding the visual layout while still leaning on the underlying structure of a web page when it is available.
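How the real system arbitrates between those channels isn't spelled out here, so treat the following as one plausible reading rather than ByteDance's design. It is a minimal sketch in which a hybrid mode prefers a DOM selector when one is known and falls back to screen coordinates otherwise. The `plan_click` function, both lookup tables, and the fallback order are all assumptions.

```python
from typing import Dict, Optional, Tuple

Plan = Tuple[str, object]  # (channel, selector string or (x, y) point)


def plan_click(
    target: str,
    dom_index: Dict[str, str],
    visual_index: Dict[str, Tuple[int, int]],
    mode: str = "hybrid",
) -> Optional[Plan]:
    """Pick a channel for clicking `target`; mode is "gui", "dom", or "hybrid"."""
    # Assumed policy: DOM first, because a selector is exact when it exists.
    if mode in ("dom", "hybrid"):
        selector = dom_index.get(target)
        if selector is not None:
            return ("dom", selector)
    # Fall back to what the vision model located on screen.
    if mode in ("gui", "hybrid"):
        point = visual_index.get(target)
        if point is not None:
            return ("gui", point)
    return None


# Hypothetical page: the search box is a DOM node, the chart is a bare canvas.
dom_index = {"Search box": "input[name=q]"}
visual_index = {"Search box": (512, 80), "Canvas chart": (300, 400)}

print(plan_click("Search box", dom_index, visual_index))
print(plan_click("Canvas chart", dom_index, visual_index))
print(plan_click("Canvas chart", dom_index, visual_index, mode="dom"))
```

The canvas case is the interesting one: a DOM-only agent has nothing to grab onto, while the hybrid mode quietly drops down to pixels.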

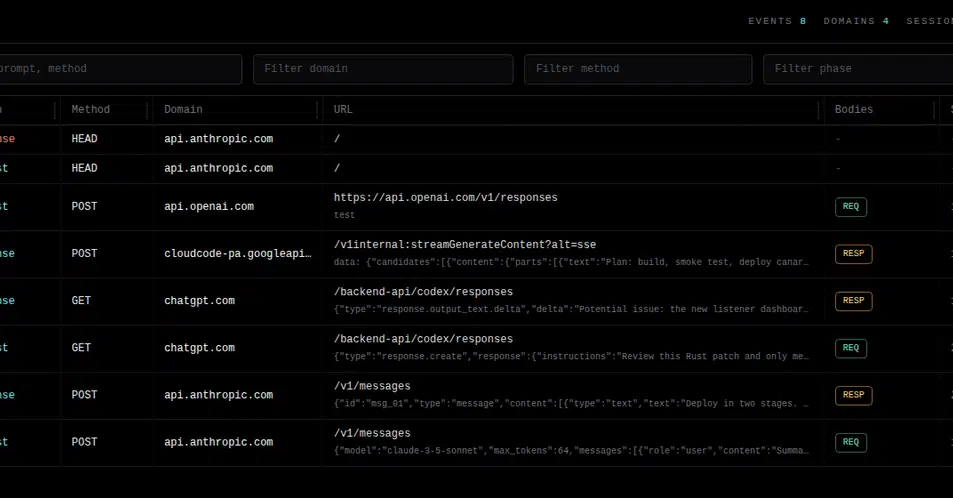

And the “Event Stream architecture”? Apparently, that’s what gives the system precise UI feedback and debuggability. Because if an AI is going to be fiddling with your desktop, you’d better be able to see what it’s doing and why it’s failing. They claim it’s SOTA (state-of-the-art) on multiple GUI agent benchmarks. We’ll see.
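The general idea behind an event stream is easy to sketch, even if the actual Agent TARS implementation surely differs. Assume an append-only log where every screenshot, action, and error becomes a numbered event that can be filtered or dumped for later inspection. The `Event` and `EventStream` classes and the event kinds below are hypothetical.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, List


@dataclass
class Event:
    seq: int
    kind: str
    payload: Dict[str, Any]
    timestamp: float = field(default_factory=time.time)


class EventStream:
    """Append-only log of everything the agent saw and did."""

    def __init__(self) -> None:
        self._events: List[Event] = []

    def emit(self, kind: str, **payload: Any) -> Event:
        event = Event(seq=len(self._events), kind=kind, payload=payload)
        self._events.append(event)
        return event

    def of_kind(self, kind: str) -> List[Event]:
        return [e for e in self._events if e.kind == kind]

    def dump(self) -> str:
        """Serialize as JSON Lines so a run can be inspected or replayed later."""
        return "\n".join(json.dumps(asdict(e)) for e in self._events)


stream = EventStream()
stream.emit("screenshot", width=1920, height=1080)
stream.emit("action", type="click", target="Save button")
stream.emit("error", message="element not found")

for failure in stream.of_kind("error"):
    print(f"step {failure.seq} failed: {failure.payload['message']}")
```

Because nothing is ever overwritten, a failed run leaves a complete trail: you can see the screenshot the agent had, the action it chose, and the error that followed, in order.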

Why Should You Care? (Besides the 32k Stars)

This isn’t just for developers tinkering with new AI toys. The potential use cases are actually pretty compelling, if they pan out.

- Cross-Application Workflow Automation: Moving data between apps without a single API call. Imagine automating that tedious Excel-to-ERP data entry.

- Intelligent Browser Control: Beyond simple web scraping. Automating complex, multi-step processes on websites, even ones that require logins.

- GUI Software Testing: Describing test cases in plain English, and having the AI run them. No more wrestling with flaky XPath locators.

- Personal Productivity Assistant: Setting up your AI to handle file organization, batch photo edits, or summarizing long documents directly on your machine.

- Accessibility Assistance: This one’s interesting. Providing voice control over a computer for people with disabilities, potentially going beyond what current assistive tech offers.

The real question, as always with these shiny new open-source projects, is who’s making money and who benefits long-term. ByteDance is obviously investing heavily in AI, and releasing powerful tools under an Apache-2.0 license makes them a major player in the AI developer ecosystem. They get mindshare, developer adoption, and potentially feedback that refines their own internal models. For us users, if it works as advertised, it promises to democratize automation for tasks that were previously locked behind technical barriers or prohibitively expensive RPA licenses.

But here’s my take: we’ve seen this play before, just with different tech. Think back to the early days of web scraping tools. They promised to unlock data from websites. Then came the RPA wave, promising to unlock business processes. UI-TARS-Desktop is the next iteration, promising to unlock the desktop itself. The real innovation here, beyond the tech, is ByteDance’s willingness to tackle the clunky, visual, human-centric interface that has been the bane of automation for decades. It’s a bet that AI’s visual understanding has finally caught up to the chaos of a typical desktop.

Will it live up to the 32,300+ stars? That’s the million-dollar question. But the direction it points is clear: AI is no longer just about code and servers. It’s coming for your desktop.