Seven days into the month, a developer found themselves staring at a bill that had already consumed 75% of their AI API budget. Nothing had changed in their workflow — same codebase, same questions, same established tools. Yet, the token meter was spinning wildly, as if they’d left a digital garden hose running at full blast.

This wasn’t a case of runaway prompt engineering; the real culprit was how context gets fed to the model. When you ask an AI like Claude or GPT, “how does my auth middleware work?”, many existing tools stuff entire files (auth.ts, sometimes even its dependencies) into the prompt. That is 300 to 800 lines of code when often only a few dozen are relevant. Call it the Confusion Tax: paying for tokens that actively degrade AI performance by introducing noise, fostering hallucinations, and inflating costs.

Traditional Retrieval-Augmented Generation (RAG) approaches treat code as mere text, a flat document. They fail to grasp the inherent structure: that validateToken() calls checkExpiry(), which in turn imports from crypto/utils.ts. This graph-like relationship, where functions call, extend, and import from each other, exists whether the tooling acknowledges it or not.

To intelligently answer “how does the auth middleware work?”, one doesn’t need the entire file. What’s required is a focused set: the authMiddleware function’s body, the immediate functions it calls, and any dependent types or interfaces. This constitutes a k-step neighborhood traversal from an anchor node in the code graph, a far cry from a brute-force file dump.
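
To make the k-step neighborhood idea concrete, here is a minimal sketch in Python. The graph contents, the `GRAPH` and `k_step_neighborhood` names, and the choice to follow only outgoing edges are illustrative assumptions, not Nexus-Graph's actual code.

```python
from collections import deque

# Hypothetical dependency graph for the auth example: each symbol maps to
# the symbols it calls, imports, or references (outgoing edges only).
GRAPH = {
    "authMiddleware": ["validateToken", "AuthRequest"],
    "validateToken": ["checkExpiry"],
    "checkExpiry": ["crypto/utils.ts"],
    "AuthRequest": [],
    "crypto/utils.ts": [],
    # A caller of the anchor; not reached when following outgoing edges only.
    "renderDashboard": ["authMiddleware"],
}


def k_step_neighborhood(graph, anchor, k):
    """Return {symbol: distance} for every symbol within k edges of anchor."""
    distances = {anchor: 0}
    queue = deque([anchor])
    while queue:
        node = queue.popleft()
        if distances[node] == k:
            continue  # do not expand past the k-step boundary
        for neighbor in graph.get(node, []):
            if neighbor not in distances:
                distances[neighbor] = distances[node] + 1
                queue.append(neighbor)
    return distances


print(k_step_neighborhood(GRAPH, "authMiddleware", 1))
# {'authMiddleware': 0, 'validateToken': 1, 'AuthRequest': 1}
```

With k = 1 the result is the anchor plus its direct dependencies; raising k to 2 also pulls in checkExpiry, while the rest of the codebase stays out of the prompt.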

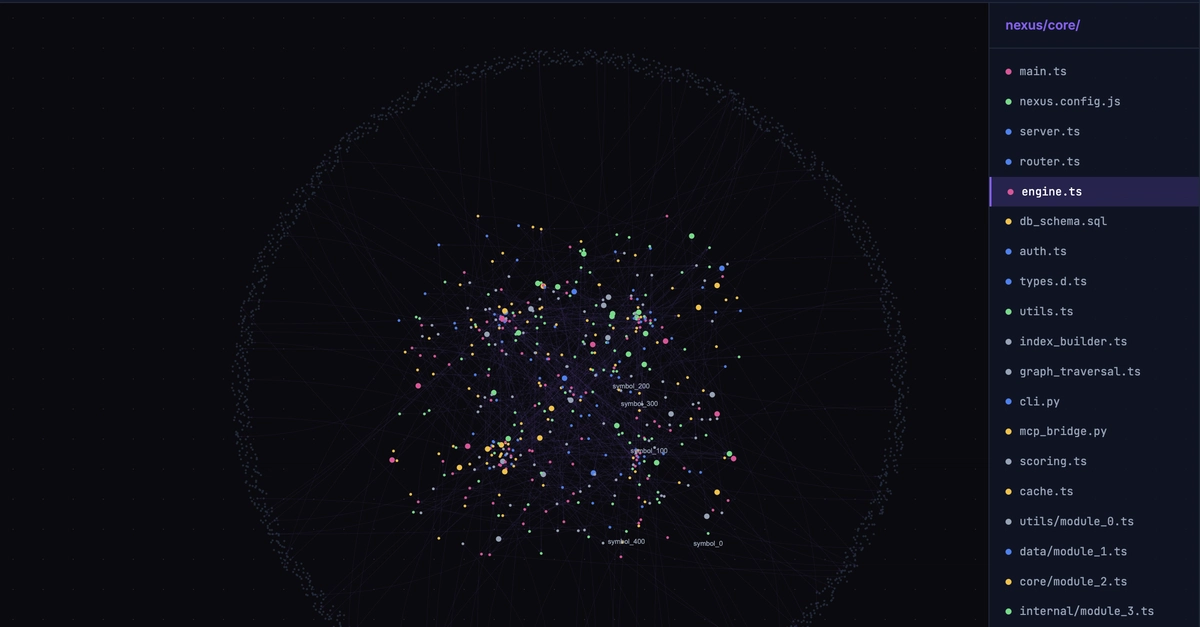

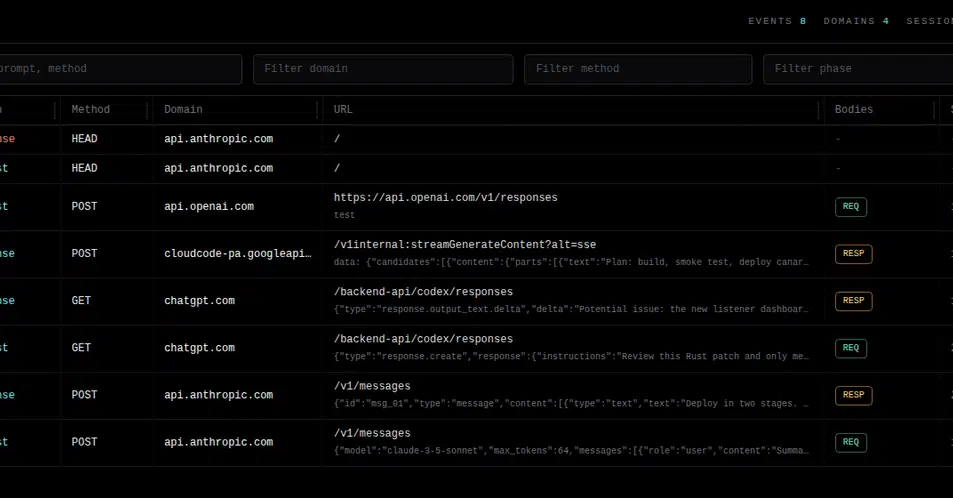

Nexus-Graph, a new open-source entrant, positions itself as a local-first code intelligence engine that parses codebases into a directed symbol graph. It then serves precisely budgeted context to AI assistants through the Model Context Protocol (MCP), a standard supported by tools such as Claude Code, Cursor, and Gemini.

The Nexus-Graph Approach: Building a Smarter Context Engine

Supporting Python, TypeScript, and JavaScript out of the box, Nexus-Graph uses tree-sitter for parsing, which gives it a structural understanding of code that goes beyond simple lexical analysis. The process breaks down into three stages.

First, Indexing. Nexus meticulously walks a project, parsing every relevant file (.py, .ts, .tsx, .js, .jsx) into a graph of symbols (functions, classes, variables, etc.) and their relationships (imports, calls, extensions). This graph is stored efficiently in SQLite, utilizing tables for symbols and edges that map these connections.

    -- Symbols: functions, classes, methods, variables, interfaces
    CREATE TABLE symbols (
      id TEXT PRIMARY KEY,  -- sha1(file:name:line)
      symbol_name TEXT,
      symbol_type TEXT,
      file_path TEXT,
      start_line INTEGER,
      end_line INTEGER,
      signature TEXT,
      body_hash TEXT,
      edit_count INTEGER
    );

    -- Directed edges between symbols
    CREATE TABLE edges (
      from_id TEXT,
      to_id TEXT,
      edge_type TEXT  -- imports | calls | extends | implements
    );
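
As a rough illustration of how such a schema might be populated and queried, the sketch below indexes a tiny Python module into an in-memory SQLite database. It uses Python's built-in `ast` module as a stand-in for tree-sitter, and the helper names (`symbol_id`, `index_source`, `callees`), the sample source, and the signature format are all assumptions made for the example.

```python
import ast
import hashlib
import sqlite3

SCHEMA = """
CREATE TABLE symbols (
  id TEXT PRIMARY KEY, symbol_name TEXT, symbol_type TEXT, file_path TEXT,
  start_line INTEGER, end_line INTEGER, signature TEXT, body_hash TEXT,
  edit_count INTEGER
);
CREATE TABLE edges (from_id TEXT, to_id TEXT, edge_type TEXT);
"""

# Hypothetical two-function module used as input.
SOURCE = '''
def check_expiry(token):
    return token.get("exp", 0) > 0

def validate_token(token):
    return check_expiry(token)
'''


def symbol_id(file_path, name, line):
    # Mirrors the schema comment: sha1(file:name:line).
    return hashlib.sha1(f"{file_path}:{name}:{line}".encode()).hexdigest()


def index_source(conn, file_path, source):
    """Store each top-level or nested function as a symbol, plus 'calls' edges."""
    tree = ast.parse(source)
    funcs = [n for n in ast.walk(tree) if isinstance(n, ast.FunctionDef)]
    ids = {f.name: symbol_id(file_path, f.name, f.lineno) for f in funcs}
    for f in funcs:
        params = ", ".join(a.arg for a in f.args.args)
        body_hash = hashlib.sha1(ast.dump(f).encode()).hexdigest()
        conn.execute(
            "INSERT INTO symbols VALUES (?, ?, ?, ?, ?, ?, ?, ?, ?)",
            (ids[f.name], f.name, "function", file_path, f.lineno,
             f.end_lineno, f"def {f.name}({params})", body_hash, 0),
        )
        # Record an edge for every direct call to another indexed function.
        for node in ast.walk(f):
            if (isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
                    and node.func.id in ids):
                conn.execute("INSERT INTO edges VALUES (?, ?, ?)",
                             (ids[f.name], ids[node.func.id], "calls"))


def callees(conn, name):
    """Names of the symbols that `name` calls, via a join over edges."""
    rows = conn.execute(
        """SELECT callee.symbol_name
           FROM symbols AS caller
           JOIN edges ON edges.from_id = caller.id
           JOIN symbols AS callee ON callee.id = edges.to_id
           WHERE caller.symbol_name = ? AND edges.edge_type = 'calls'""",
        (name,),
    )
    return [row[0] for row in rows]


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
index_source(conn, "auth.py", SOURCE)
print(callees(conn, "validate_token"))  # ['check_expiry']
```

Keeping edges in their own table means a one-hop lookup is a single join, and a multi-hop traversal can repeat that lookup from each newly reached symbol.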

Second, Query. When an AI assistant requests context, Nexus intelligently identifies anchor symbols via full-text search. It then performs a Breadth-First Search (BFS) traversal of the graph, typically up to k steps away from these anchors. Nodes are scored based on proximity and recency, with recently edited files receiving higher weight. The system then greedily fills a token budget, prioritizing full function bodies, then definitions, and finally pruning less critical leaf nodes.
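
The scoring and budget-filling step can be sketched as follows. The scoring formula, the use of `edit_count` as a recency signal, the token counts, and the `Candidate`, `score`, and `fill_budget` names are assumptions for illustration; the article does not specify Nexus-Graph's actual weights.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    distance: int          # BFS steps from the anchor symbol
    edit_count: int        # assumed stand-in for recency
    body_tokens: int       # cost of including the full body
    signature_tokens: int  # cost of including only the definition line


def score(c):
    # Assumed formula: closer symbols score higher, edits add a capped boost.
    return 1.0 / (1 + c.distance) + 0.1 * min(c.edit_count, 5)


def fill_budget(candidates, budget):
    """Greedily pick symbols by score: full body if it fits, else signature."""
    selected = []
    remaining = budget
    for c in sorted(candidates, key=score, reverse=True):
        if c.body_tokens <= remaining:
            selected.append((c.name, "body"))
            remaining -= c.body_tokens
        elif c.signature_tokens <= remaining:
            selected.append((c.name, "signature"))
            remaining -= c.signature_tokens
        # Otherwise the symbol is pruned from the context entirely.
    return selected


candidates = [
    Candidate("authMiddleware", 0, 3, 120, 15),
    Candidate("validateToken", 1, 1, 80, 12),
    Candidate("checkExpiry", 2, 0, 60, 10),
    Candidate("AuthRequest", 1, 0, 40, 8),
]
print(fill_budget(candidates, budget=250))
```

With a 250-token budget, the three closest symbols fit as full bodies and the most distant one, checkExpiry, is reduced to its signature.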

Third, Serve. The curated results are delivered to the AI assistant over MCP. Because the assistants developers are already adopting support this protocol, Nexus integrates with them natively.

Quantifiable Wins: Beyond the Hype

The switch to graph-based retrieval comes with concrete reported numbers. Developers report roughly 70% fewer tokens per query than with whole-file dumps, and context blocks 5-10x smaller. Indexing is fast as well: Nexus is said to index over 1,000 files in under 15 seconds on a standard M1 MacBook Air. Context queries, which must keep pace with real-time AI assistance, complete in under 100 milliseconds on an indexed project.

Installation is straightforward, since the tool is published as a global npm package:

    # Install globally
    npm install -g @costline/nexus-graph

    # Index your project
    nexus-graph index --project .

    # Start the MCP server
    nexus-graph server --project .

Integration with tools like Claude Code is equally simple, often requiring a minor configuration update in ~/.claude/settings.json to point to the running Nexus MCP server. This allows Claude to automatically invoke get_context_for_query before responding to codebase-related questions.
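
For a sense of what such an invocation looks like on the wire, MCP tool calls are JSON-RPC 2.0 requests with the method `tools/call`. The sketch below builds one for `get_context_for_query`; the argument names `query` and `max_tokens` are hypothetical, since the article does not document the tool's input schema.

```python
import json


def build_tool_call(request_id, query, max_tokens):
    """Build an MCP tools/call request as a plain dict."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {
            "name": "get_context_for_query",
            # Hypothetical argument names; the real input schema may differ.
            "arguments": {"query": query, "max_tokens": max_tokens},
        },
    }


request = build_tool_call(1, "how does the auth middleware work?", 2000)
print(json.dumps(request, indent=2))
```

In practice the MCP client inside the assistant constructs and sends this message, so developers rarely write it by hand.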

Furthermore, Nexus includes a file watcher (--watch flag) that supports incremental re-indexing. This means that after edits, only the affected symbols and edges are updated in real time, eliminating the need for a full project re-index, a significant boon for developer productivity.
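
One plausible way to decide which symbols are affected is to compare stored body hashes against freshly computed ones, which the `body_hash` column in the schema would support. This is a sketch under that assumption, with hypothetical symbol names and helper functions, not a description of Nexus-Graph's actual watcher.

```python
import hashlib


def body_hash(body):
    return hashlib.sha1(body.encode()).hexdigest()


def diff_symbols(indexed, current):
    """Compare stored hashes with a fresh parse of one changed file.

    indexed: {symbol name: stored body hash}
    current: {symbol name: body text from the re-parsed file}
    Returns (added, changed, removed) lists of symbol names.
    """
    current_hashes = {name: body_hash(body) for name, body in current.items()}
    added = sorted(set(current_hashes) - set(indexed))
    removed = sorted(set(indexed) - set(current_hashes))
    changed = sorted(
        name for name in current_hashes.keys() & indexed.keys()
        if current_hashes[name] != indexed[name]
    )
    return added, changed, removed


# Hypothetical state before and after an edit to one file.
indexed = {
    "validateToken": body_hash("return checkExpiry(t)"),
    "checkExpiry": body_hash("return t.exp > now()"),
    "legacyCheck": body_hash("return true"),
}
current = {
    "validateToken": "return checkExpiry(t)",
    "checkExpiry": "return t.exp > Date.now()",
    "refreshToken": "return issue(t)",
}
print(diff_symbols(indexed, current))
# (['refreshToken'], ['checkExpiry'], ['legacyCheck'])
```

Only the symbols in those three lists, and the edges touching them, would need to be rewritten; validateToken is left alone because its hash is unchanged.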

Why Does This Matter for the Future of AI Dev Tools?

Nexus-Graph’s MIT license and GitHub availability democratize access to this advanced code intelligence. The core issue it addresses — inefficient context management — is foundational to the practical application of AI in software development. For too long, the industry has accepted a bloated, token-hungry approach, mistaking simple document retrieval for genuine code understanding. This new paradigm, built on the inherent graph structure of code, represents a maturation of AI development tools.

It’s a clear signal that brute force token consumption is not the only, nor the best, path forward. The future of AI-assisted coding hinges on smarter, more efficient data retrieval. Nexus-Graph doesn’t just offer a cost-saving mechanism; it points to a more intelligent, precise, and ultimately more effective way for developers to collaborate with AI assistants on complex codebases.

Frequently Asked Questions

What is the “Confusion Tax”? The “Confusion Tax” refers to the unnecessary AI API costs incurred when tools provide excessive, irrelevant code context to AI assistants. This noise can degrade AI performance, leading to hallucinations and higher bills, rather than providing targeted, useful information.

How does Nexus-Graph understand code better than traditional methods? Instead of treating code as flat text, Nexus-Graph parses it into a directed symbol graph. This allows it to understand relationships between functions, classes, and modules (e.g., which function calls another), enabling it to retrieve only the most relevant code snippets for AI queries.

Is Nexus-Graph free to use? Yes, Nexus-Graph is open-source and MIT licensed, meaning it’s free to use for both personal and commercial projects.