73% of Enterprises Running Wild AI: Security Nightmare Incoming

Picture your AI-powered loan approver hacked by a teenager's prank prompt. That's not sci-fi; it's enterprise reality for 73% of teams right now.

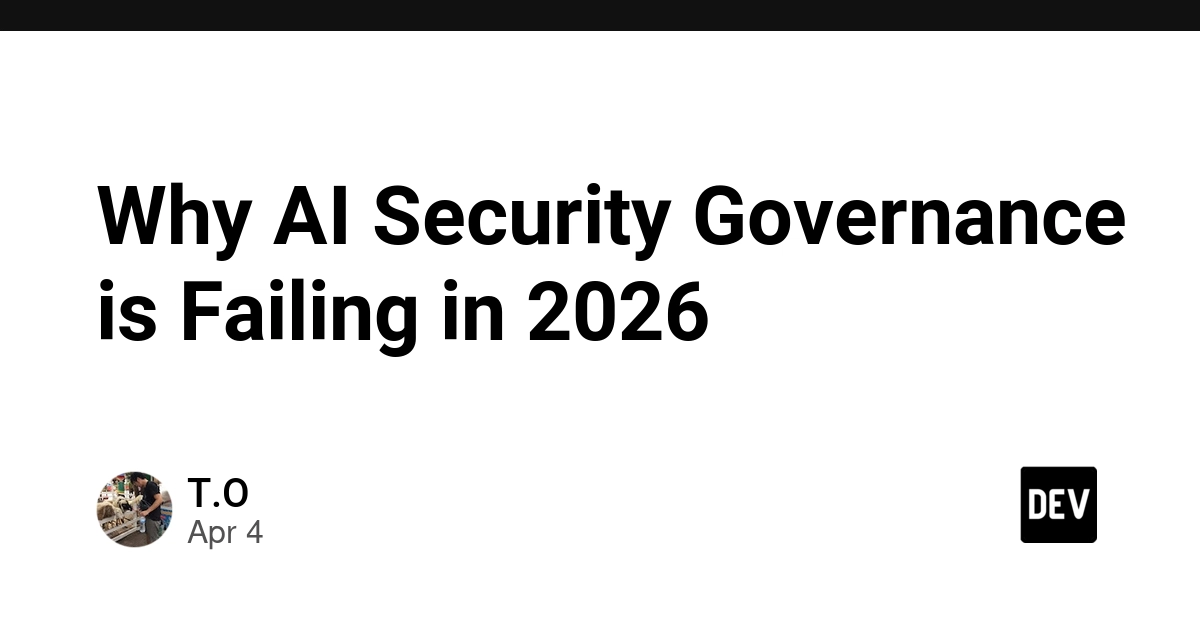

Prompt guards are tripping over their own feet, flagging harmless chats as attacks. Enter PIGuard, promising a fix – if you buy the pitch.

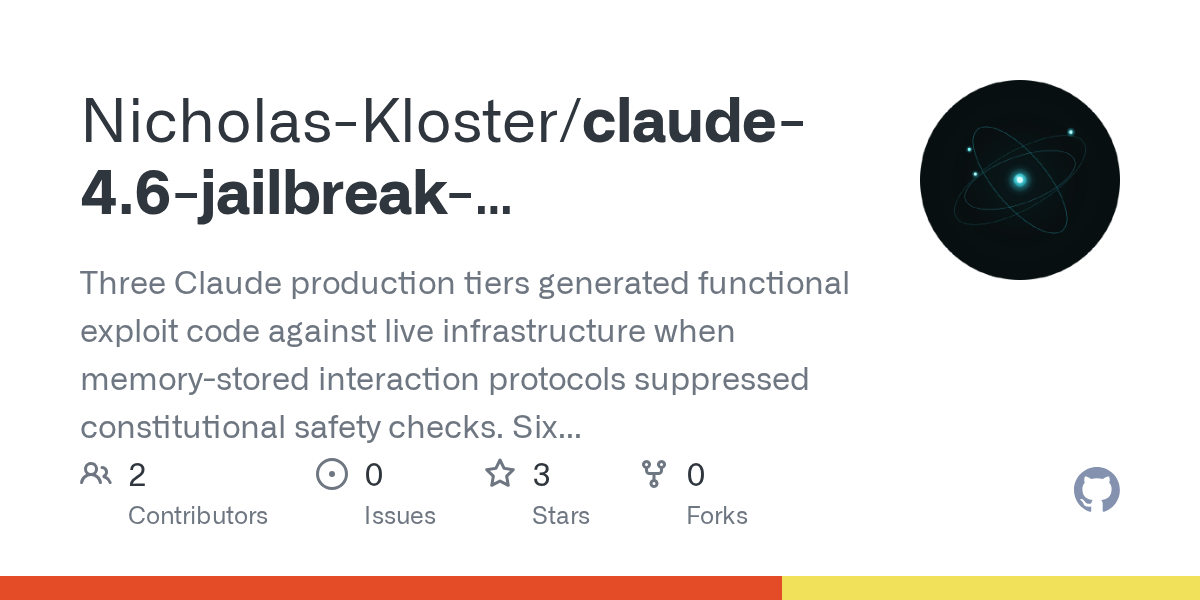

Anthropic's Claude 4.6 models just had an embarrassing moment. A researcher jailbroke all tiers, extracted production secrets, and got zero response after 27 days of pings.

Everyone thought MCP would tame wild AI agents with safe tools. Wrong. Prompt injection is turning MCP servers into sitting ducks, exposing files, opening the door to SSRF, and worse.

Picture this: your AI agent, humming along on a remote MCP server, suddenly deletes your entire repo because of a sneaky prompt injection. That's not a demo fail—it's production hell. Here's the checklist to keep the chaos contained.

Cloudflare just flipped the switch on AI Security for Apps, making it generally available with free endpoint discovery. Sounds great—until you poke at the probabilistic mess of AI threats.