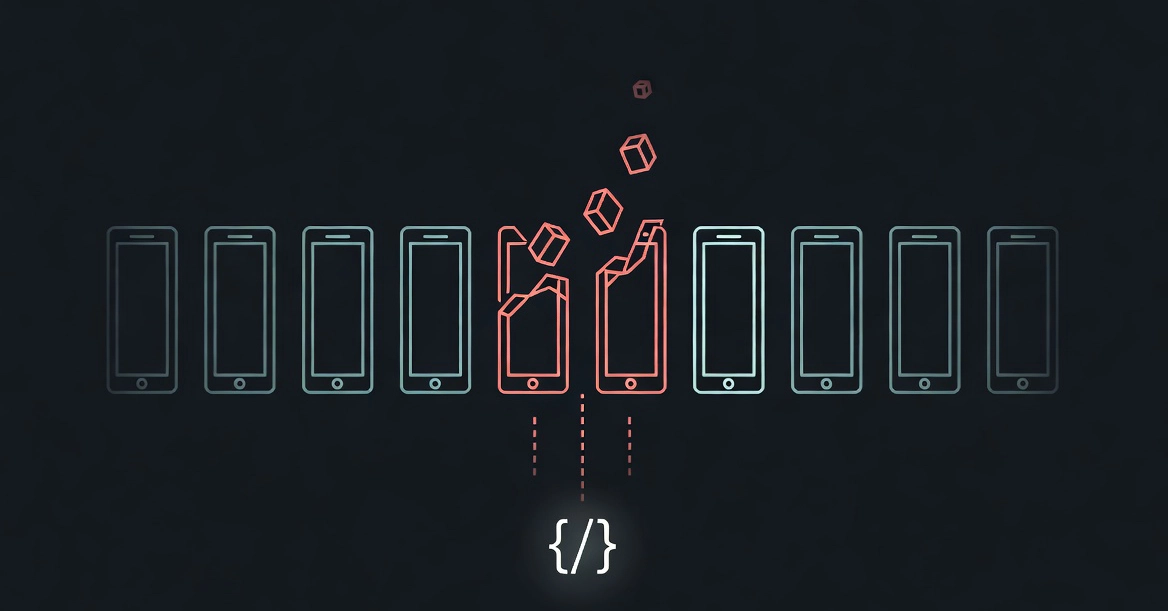

I Fed Fake System Commands to 10 LLMs—Three Betrayed Their Secrets

Five lines of XML pasted into a chat. Seven LLMs shrugged it off. Three? They dumped their guts in JSON. Prompt injection isn't theory. It's here, and it works.