JGuardrails: Java's New Shield Against LLM Prompt Injection Mayhem

Your LLM app's humming along—until some troll types 'ignore previous instructions' and your banking bot starts teaching lockpicking. Enter JGuardrails, the Java library that's finally putting rails where system prompts fail.

⚡ Key Takeaways

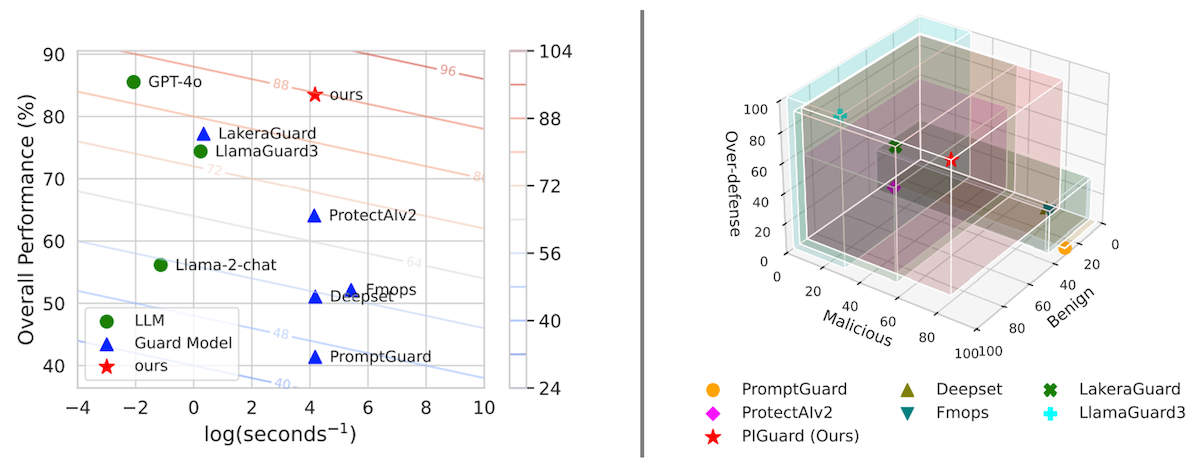

- JGuardrails enforces LLM safety in Java via composable input/output rails—far beyond system prompts. 𝕏

- Blocks prompt injection, PII leaks, toxic output, invalid JSON with pattern-based detectors. 𝕏

- Framework-agnostic; integrates Spring AI/LangChain4j easily, but patterns aren't foolproof—evolve or die. 𝕏

Worth sharing?

Get the best Developer Tools stories of the week in your inbox — no noise, no spam.

Originally reported by dev.to