Everyone expected more features, more polish, more growth hacks. Instead, a simple dive into the database revealed something far more fundamental: the plumbing itself was fractured. For indie SaaS operators, the siren song of building is often louder than the quiet drip of revenue loss. This isn’t about a flashy new framework or a complex algorithm; it’s about the bedrock of data integrity, and how easily it can erode.

Look, the conventional wisdom for bootstrapping a SaaS often boils down to relentless feature development and aggressive marketing. You build, you iterate, you push updates, you spend hours crafting the perfect onboarding flow. The assumption is that the core mechanics — billing, cancellations, user status — are humming along reliably in the background. But what happens when the background isn’t humming at all? What happens when it’s quietly weeping revenue into the ether?

This is precisely the scenario a solo founder, running a tiny aviation SaaS with $45 MRR and a staggering 75% churn rate, stumbled upon. In a mere 45 minutes, a shift from coding new features to interrogating existing data unearthed three deeply insidious bugs. Bugs that weren’t crashing the application, but were systematically bleeding cash.

The Phantom Winback Campaign

The first issue surfaced with a winback cron job designed to re-engage canceled subscribers. The intention: send emails on days 7, 21, and 45 post-cancellation. The mechanism: a Supabase query fetching subscriptions with status='canceled' and an updated_at timestamp within the last 60 days. Simple enough, right? Except updated_at was the wrong column entirely: the table did not have one. The correct column, the one that actually marked the moment of cancellation, was canceled_at.
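As a rough illustration of the day-7/21/45 schedule, the sketch below picks the winback step a canceled subscription is due for on a given day. The names `winbackDayFor`, `CanceledSubscription`, and `WINBACK_DAYS` are hypothetical and not taken from the founder's code; the only assumption carried over from the article is that scheduling keys off canceled_at.

```typescript
// Days after cancellation on which a winback email goes out (from the article).
const WINBACK_DAYS = [7, 21, 45];
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Hypothetical row shape; only canceled_at matters for scheduling.
interface CanceledSubscription {
  id: string;
  canceled_at: string | null; // ISO 8601 timestamp, or null if never recorded
}

// Returns the winback day (7, 21, or 45) this subscription hits at `now`,
// or null when no email is due.
function winbackDayFor(sub: CanceledSubscription, now: Date): number | null {
  // Without a cancellation timestamp the row can never be scheduled.
  if (sub.canceled_at === null) return null;

  const elapsedMs = now.getTime() - Date.parse(sub.canceled_at);
  const wholeDays = Math.floor(elapsedMs / MS_PER_DAY);
  return WINBACK_DAYS.indexOf(wholeDays) !== -1 ? wholeDays : null;
}
```

Because the function compares whole elapsed days, it assumes the cron runs once a day; a job that skips a day would also skip that day's emails.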

When the Supabase JS client hit this discrepancy, it didn’t throw a loud, attention-grabbing error. It returned a polite { error: 'column does not exist' } in its result object. The cron, designed to be robustly silent, caught this error and, crucially, returned an empty array. Consequently, the job ran daily for weeks, logging ‘0 candidates, 0 emails sent, exit 0’. No alarms, no alerts, just a quiet, consistent failure.

The meta-lesson here is profound: when your code encounters an error, especially in automation flows where silent failure is the norm, the default response should not be to gracefully return an empty set. It should be to log the damn error first. Because in marketing automation, silent failure doesn’t result in bounces; it results in customers you never hear from again, customers who simply disappear into a void of uncommunicated offers.
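One way to apply that lesson is a small guard around every query result, sketched below. The `QueryResult` type only mirrors the `{ data, error }` shape that Supabase JS queries resolve to; `rowsOrThrow` is a hypothetical helper name, not part of any library.

```typescript
// Mirrors the { data, error } shape a Supabase JS query resolves to.
type QueryResult<T> = {
  data: T[] | null;
  error: { message: string } | null;
};

// Hypothetical helper: log the error, then fail loudly,
// instead of quietly handing back an empty array.
function rowsOrThrow<T>(result: QueryResult<T>, context: string): T[] {
  if (result.error) {
    console.error(`[${context}] query failed: ${result.error.message}`);
    throw new Error(`${context}: ${result.error.message}`);
  }
  // A successful query with no matches is a legitimate empty result.
  return result.data ?? [];
}
```

With a guard like this, "0 candidates" in the log can only mean the query ran and matched nothing, which is the distinction the original cron lost.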

The Ghost of Cancellations Past

Compounding this, the investigation revealed 14 rows in the subscriptions table marked as 'canceled' but with a canceled_at timestamp of NULL. Stripe, the payment processor, had the accurate cancellation date. The founder’s database, however, did not. The culprit? A subtly flawed webhook handler for customer.subscription.deleted.

The problem sat in the handler’s structure: the canceled_at write was tucked away inside a try block, alongside other ‘side effects’. If any of those other operations failed (which, apparently, one or more of them did), the rest of the try block was skipped. The initial update setting the status to 'canceled' would run, but the crucial canceled_at timestamp, and thus the record of when the customer left, would be lost. This meant that even with the column name fixed, the winback cron would have found zero historical candidates because the filtering column was NULL across the board.

The webhook just never stored it.

The fix was deceptively simple: move the canceled_at timestamp to an unconditional update, ensuring it’s always set when a subscription is marked as canceled. Then, a manual backfill from Stripe’s API corrected the historical data. It’s a potent reminder that denormalized convenience can easily become a liability when not handled with extreme care.
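A minimal sketch of that restructuring follows, under stated assumptions: the handler is written synchronously and takes its database write and side effects as injected functions so the example stays self-contained. `handleSubscriptionDeleted`, `HandlerDeps`, and the side-effect names are hypothetical. The one external fact relied on is that Stripe reports canceled_at as a Unix timestamp in seconds.

```typescript
interface SubscriptionPatch {
  status: "canceled";
  canceled_at: string; // ISO 8601
}

// Hypothetical dependencies, injected to keep the sketch self-contained.
interface HandlerDeps {
  updateSubscription: (id: string, patch: SubscriptionPatch) => void;
  sideEffects: Array<{ name: string; run: (id: string) => void }>;
  logError: (message: string) => void;
}

// Sketch of a handler for customer.subscription.deleted.
function handleSubscriptionDeleted(
  subscriptionId: string,
  canceledAtUnix: number, // Stripe timestamps are Unix seconds
  deps: HandlerDeps
): void {
  // 1. The critical write happens first, in a single update, and is not
  //    wrapped in a try block shared with anything else.
  deps.updateSubscription(subscriptionId, {
    status: "canceled",
    canceled_at: new Date(canceledAtUnix * 1000).toISOString(),
  });

  // 2. Each side effect gets its own try block, so one failure is logged
  //    and cannot prevent the others from running.
  for (const effect of deps.sideEffects) {
    try {
      effect.run(subscriptionId);
    } catch (err) {
      deps.logError(`${effect.name} failed for ${subscriptionId}: ${String(err)}`);
    }
  }
}
```

If the critical update itself throws, the error propagates to the caller, which is the intended behavior here: a failed webhook response lets Stripe retry the event.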

The Zombie Profile Problem

This third bug directly built on the second. An abandoned checkout recovery cron job was designed to email users who had started a checkout but hadn’t completed it. It pulled Stripe sessions marked as 'expired' and then attempted to filter out users who had already converted, checking profiles.subscription_tier != 'free'. The intention was sound: don’t bother folks who are already paying customers.

However, due to the webhook bug mentioned previously, 51 premium profiles in the database were not correctly downgraded to 'free' upon cancellation. These were essentially ‘zombie’ profiles. When a new, genuinely abandoned checkout user shared an email address associated with one of these lingering premium profiles, the cron would silently skip them. The output: {"total_abandoned": 11, "sent": 0, "skipped": 11}. The system looked like it was working, yet it skipped every one of the 11 abandoned checkouts it found, failing to engage a significant chunk of potentially valuable leads.

The fix? Shift the ‘already paid’ determination from the denormalized profiles.subscription_tier to the source of truth: subscriptions.status='active'. The subscriptions table, being directly tied to Stripe, is inherently more reliable for this kind of state management.
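The revised filter might look like the sketch below. It assumes sessions and subscriptions can be matched on email, which the article implies but does not spell out; a real schema may join on a user or customer ID instead. `recipientsForRecovery` and both interfaces are hypothetical names.

```typescript
interface AbandonedSession {
  email: string;
}

interface SubscriptionRow {
  email: string;
  status: string; // e.g. 'active', 'canceled'
}

// Returns the emails that should receive a recovery message: everyone with
// an expired checkout session who has no active subscription.
function recipientsForRecovery(
  sessions: AbandonedSession[],
  subscriptions: SubscriptionRow[]
): string[] {
  // Build a lookup of emails with an active subscription (the source of truth).
  const activeEmails: Record<string, boolean> = {};
  for (const sub of subscriptions) {
    if (sub.status === "active") {
      activeEmails[sub.email.toLowerCase()] = true;
    }
  }

  return sessions
    .map((session) => session.email.toLowerCase())
    .filter((email) => !activeEmails[email]);
}
```

A stale profile tier no longer matters in this version: a user whose subscription row says 'canceled' is treated as a lead again, whatever the profiles table still claims.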

The High-Leverage 45 Minutes

The immediate results of this 45-minute deep dive were tangible: 8 winback emails sent, 11 abandoned checkout emails poised to be sent on the next run, and 15 historical timestamps corrected. With the WINBACK60 coupon in play (60% off for 3 months), even two conversions would represent a 66% MRR growth for this tiny SaaS. This is the power of addressing foundational issues.

While others were busy writing new code, adding more features, and crafting more email variants, this founder spent a short burst of time interrogating their own data. The lesson is stark: sometimes, the most impactful development work isn’t about building new things, but about ensuring the existing foundations aren’t actively undermining your efforts. SQL-querying your own database, and reconciling that data against your payment processor, is often the highest-leverage activity an indie developer can undertake.

For any small SaaS operator, running a simple SELECT status, COUNT(*) FROM subscriptions GROUP BY status and comparing it against your Stripe dashboard is not just good practice; it’s a critical check that can prevent silent revenue leaks before they become existential threats.
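That comparison can also be automated. The sketch below assumes the per-status counts have already been fetched from both sides (the GROUP BY query for the database, and whatever listing or export is used for Stripe) and only performs the diff. `diffStatusCounts` and its types are hypothetical names.

```typescript
// Status name -> number of subscriptions in that status.
type StatusCounts = Record<string, number>;

interface Mismatch {
  status: string;
  database: number;
  stripe: number;
}

// Lists every status whose count differs between the database and Stripe.
// A status missing on one side is treated as a count of 0.
function diffStatusCounts(database: StatusCounts, stripe: StatusCounts): Mismatch[] {
  const statuses = Object.keys({ ...database, ...stripe }).sort();
  const mismatches: Mismatch[] = [];

  for (const status of statuses) {
    const dbCount = database[status] ?? 0;
    const stripeCount = stripe[status] ?? 0;
    if (dbCount !== stripeCount) {
      mismatches.push({ status, database: dbCount, stripe: stripeCount });
    }
  }
  return mismatches;
}
```

An empty result means the two systems agree on counts; it does not prove every individual row matches, so a non-empty result is the signal worth alerting on.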

Why Does This Matter for Developers?

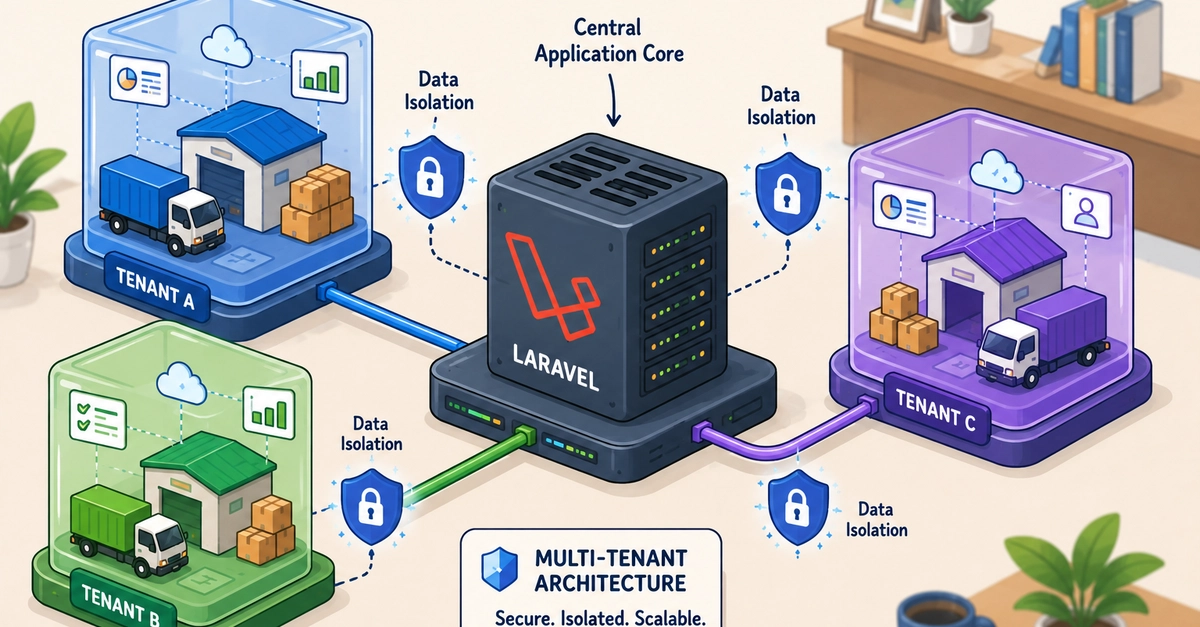

This isn’t just an issue for solo founders; it’s a cautionary tale for engineering teams of all sizes. The pressure to deliver new features can often overshadow the critical need for strong data integrity and reliable background processes. When error handling is lax, or when data models become overly denormalized without rigorous validation, the potential for silent, revenue-impacting bugs rises sharply. Developers must champion rigorous error logging, meticulous data synchronization, and a healthy skepticism towards convenience features that might drift from the source of truth. The architecture might look good on paper, but if the data pipeline has holes, the whole structure is compromised.

What Went Wrong Technically?

The core technical failures stemmed from a few key areas:

- Inadequate Error Handling: Cron jobs and webhooks were failing silently, not logging critical errors that would have immediately flagged issues.

- Incorrect Column Usage: The winback cron mistakenly used updated_at instead of canceled_at for filtering.

- Risky try-catch Implementation: Placing critical data updates within try blocks alongside other operations meant that failure in any one part could abort the entire update, leaving data in an inconsistent state.

- Denormalization Drift: Relying on denormalized profiles.subscription_tier for crucial logic, which had become out of sync with the actual subscriptions status due to the webhook bug.

These aren’t exotic problems; they’re common pitfalls that can be avoided with disciplined engineering practices, focused code reviews, and a commitment to understanding the full data flow from ingestion to processing.

Frequently Asked Questions

Will this replace my job? No. This article highlights critical data integrity and error handling issues common in SaaS development. The human element of identifying, diagnosing, and fixing these complex, context-dependent bugs remains essential.

What does ‘silent revenue-leaking bug’ mean? It means a bug that causes the business to lose money or potential revenue without throwing obvious errors or alerts. The system appears to be running, but critical money-making or money-retaining functions are failing in the background.

How can I implement better error logging for my cron jobs? Ensure your cron jobs and background tasks explicitly log any errors encountered during execution, including error messages, relevant context (like user IDs or timestamps), and the specific operation that failed. This log data should be monitored or have alerts set up.
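As a rough sketch of that advice, the wrapper below runs a job, writes one structured log line on failure, and reports a non-zero exit code so a scheduler or monitor can tell the run failed. `runJob` and `JobResult` are hypothetical names, and the job is synchronous purely to keep the example short; a real cron task would typically be async.

```typescript
interface JobResult {
  exitCode: number;
}

// Runs a job and turns any thrown error into a structured log line
// plus a non-zero exit code.
function runJob(
  name: string,
  job: () => void,
  log: (line: string) => void = console.error
): JobResult {
  const startedAt = new Date().toISOString();
  try {
    job();
    return { exitCode: 0 };
  } catch (err) {
    const message = err instanceof Error ? err.message : String(err);
    // One JSON object per line is easy to search and to alert on.
    log(JSON.stringify({ job: name, startedAt, level: "error", message }));
    return { exitCode: 1 };
  }
}
```

In a Node script the returned code would be assigned to process.exitCode, so that "exit 0" is reserved for runs that actually succeeded.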