Model Armor Blocks AI Attacks Before They Hit GKE Models

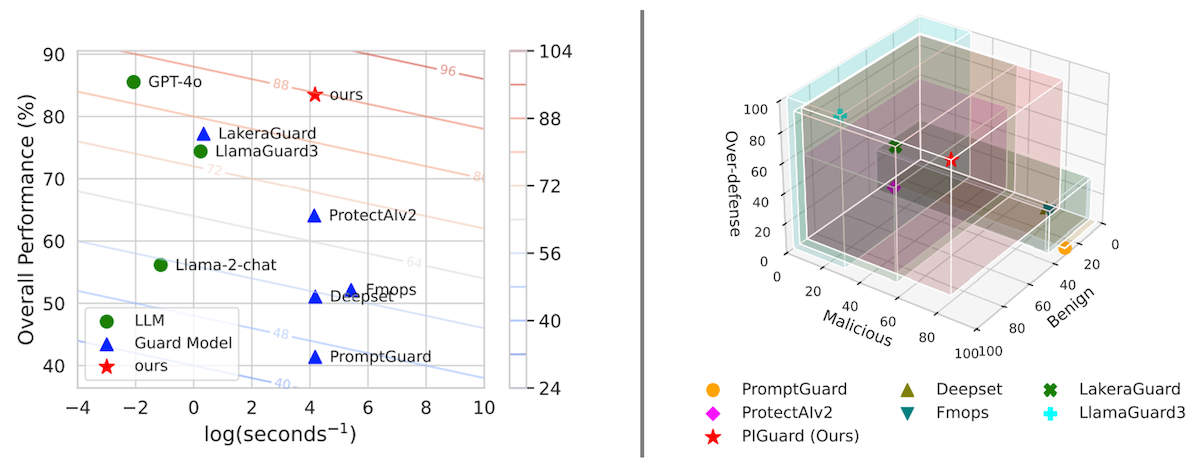

Enterprises rushing AI into production on GKE face a hard reality: models can leak secrets and fall to prompt injection and jailbreak attempts. Model Armor acts as a screening layer, scanning prompts before they reach the model and responses before they reach the user.
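To make the idea of scanning both inputs and outputs concrete, here is a minimal sketch of the general pattern in Python. It is not Model Armor's API: the function names (`screen_prompt`, `screen_response`, `guarded_call`) and the regex patterns are hypothetical stand-ins, and a managed service would rely on far richer detectors than a handful of regular expressions.

```python
import re
from typing import Callable

# Hypothetical patterns for illustration only; not Model Armor's detectors.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your )?system prompt", re.IGNORECASE),
]
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),  # shape of an AWS access key ID
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
]


def screen_prompt(prompt: str) -> bool:
    """Return True when the prompt matches no injection pattern."""
    return not any(p.search(prompt) for p in INJECTION_PATTERNS)


def screen_response(response: str) -> bool:
    """Return True when the response matches no secret pattern."""
    return not any(p.search(response) for p in SECRET_PATTERNS)


def guarded_call(model: Callable[[str], str], prompt: str) -> str:
    """Screen the prompt, call the model, then screen its response."""
    if not screen_prompt(prompt):
        return "[blocked: prompt failed screening]"
    response = model(prompt)
    if not screen_response(response):
        return "[blocked: response failed screening]"
    return response
```

The point of the sketch is the placement of the checks: one before the model is invoked and one before the response is returned, so a blocked prompt never reaches the model and a leaked secret never reaches the caller.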



Originally reported by Google Cloud Blog